The AiGILE Blog

Why Employee-Led AI Adoption Outperforms Top-Down Mandates

There are two ways to approach AI adoption in a mid-sized business. The first is to have leadership define the AI strategy, select the tools, develop the policies, and then communicate the change down through the organisation. The second is to actively involve employees in identifying AI opportunities, developing solutions, and driving adoption from within.

Both approaches have a role to play. But the evidence — and the experience of businesses that have done this well — strongly suggests that organisations which enable and harness employee-driven adoption achieve significantly better results than those that rely primarily on top-down mandates.

What is employee-led AI adoption?

Employee-led AI adoption doesn't mean leaving the workforce to do whatever they want with AI tools — that's a governance problem, not a strategy. It means creating the conditions where employees are empowered to identify AI opportunities in their own work, experiment within a governed framework, and champion AI adoption within their teams.

The most visible mechanism for employee-led adoption is the AI Champion programme — a network of trained, enthusiastic employees who act as peer coaches and advocates within their teams. But employee-led adoption is broader than a single programme. It's a cultural orientation that treats employees as active participants in AI adoption rather than passive recipients of it.

Why do top-down AI mandates so often fail?

Top-down AI mandates fail for the same reasons that most top-down mandates fail: they underestimate the complexity of changing how people actually work, and they overestimate what authority alone can achieve.

A mandate can require people to attend training. It can't make them learn. It can require people to use a tool. It can't make them use it effectively or enthusiastically. The difference between compliance and genuine adoption is motivation — and motivation is generated by understanding, agency, and relevance, none of which are features of a mandate.

The other limitation of purely top-down approaches is that leadership typically doesn't have granular visibility into where the highest-value AI opportunities exist in day-to-day operations. The employees doing the work have that visibility. A purely top-down approach systematically underuses this intelligence, often resulting in AI investments that are strategically logical but operationally suboptimal.

How do you create the conditions for employee-led AI adoption?

Creating the conditions for employee-led adoption involves four elements working together.

Psychological safety means employees need to feel safe to experiment, ask questions, and make mistakes without fear of negative consequences. In environments where failure is punished, people don't experiment — and AI adoption without experimentation is extremely slow.

A clear governance framework gives employees the parameters within which they can experiment. What tools are approved? What data can be used? What's the process for trying something new? Without this, well-intentioned employee experimentation risks generating the shadow AI problems discussed in a previous post.

Time and resources — even modest ones — signal that the organisation is serious about AI adoption and that employee engagement with it is valued, not merely tolerated. Dedicated time for learning and experimentation is one of the most effective investments a business can make.

Recognition and visibility for successful AI innovations creates social proof and momentum. When employees see their colleagues being recognised for finding smart ways to use AI, they're more likely to engage. This is the AI equivalent of the "bright spots" approach to change management — identifying and amplifying what's already working.

What is an AI Champion programme and how does it work?

An AI Champion programme identifies and develops a network of employees who serve as the primary conduit for AI learning and adoption within their teams. Champions are typically selected based on genuine enthusiasm and peer credibility rather than hierarchy — someone their colleagues trust and turn to for advice.

Champions receive additional training that goes beyond the general workforce programme — deeper tool knowledge, facilitation skills, and an understanding of how to help colleagues work through challenges. They then operate as embedded resources within their teams: answering questions, sharing useful tips and use cases, facilitating peer learning, and providing feedback to the central AI adoption team about what's working and what isn't.

The leverage effect of a Champion programme is significant. A single well-trained Champion supporting a team of 15 people effectively multiplies the reach of the formal training investment many times over. Champions also build the kind of informal social proof that formal programmes can't replicate: "I saw Sarah using this to cut her monthly report from a half day to an hour" is more persuasive than any official communication.

Frequently Asked Questions

How do you prevent employee-led AI from becoming ungoverned shadow AI? The key is that employee-led adoption operates within a defined governance framework, not outside it. This means having a clear AI policy that defines approved tools and data use, a visible process for employees to propose and try new tools within sanctioned parameters, and a Champion network that reinforces governance norms alongside promoting adoption. Employee-led and well-governed are not in conflict — they require a framework that enables both.

Which employees make the best AI Champions? The best Champions are enthusiastic about AI (intrinsically, not just because they were asked), respected by their peers for competence rather than just hierarchy, communicative and patient, and genuinely interested in helping others rather than showcasing their own knowledge. Technical background helps but isn't essential — some of the most effective Champions are deeply functional users of AI tools with no formal technology background.

How do you measure the success of employee-led AI adoption? The primary metrics are adoption rate (what percentage of employees are actively using AI tools in their work), capability improvement (assessed before and after), and business impact (time saved, quality improvements, process efficiency gains). Secondary metrics include Champion programme engagement, training completion rates, and sentiment scores from regular pulse surveys. The combination of behavioural metrics (what people are actually doing) and outcome metrics (what it's delivering for the business) gives the fullest picture.
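As a rough illustration of the primary metrics, the sketch below computes an adoption rate and an average capability improvement from a handful of survey records. The field names (`uses_ai_weekly`, `score_before`, `score_after`), the 0 to 100 scoring scale, and the sample figures are all assumptions made for the example, not a prescribed schema or real data.

```python
from dataclasses import dataclass


@dataclass
class EmployeeRecord:
    # All fields are hypothetical; adapt them to whatever your survey captures.
    name: str
    uses_ai_weekly: bool   # treated here as "actively using AI tools"
    score_before: float    # capability assessment before the programme (0-100)
    score_after: float     # capability assessment after the programme (0-100)


def adoption_rate(records):
    """Percentage of employees actively using AI tools in their work."""
    if not records:
        return 0.0
    active = sum(1 for r in records if r.uses_ai_weekly)
    return 100.0 * active / len(records)


def mean_capability_gain(records):
    """Average change in assessed capability, in score points."""
    if not records:
        return 0.0
    return sum(r.score_after - r.score_before for r in records) / len(records)


# Made-up sample data, for illustration only.
team = [
    EmployeeRecord("A", True, 40, 65),
    EmployeeRecord("B", False, 35, 45),
    EmployeeRecord("C", True, 50, 80),
    EmployeeRecord("D", True, 60, 75),
]

print(f"Adoption rate: {adoption_rate(team):.0f}%")
print(f"Mean capability gain: {mean_capability_gain(team):.1f} points")
```

Business impact (time saved, quality improvements) is deliberately left out of the sketch, because how it is measured depends heavily on the process in question.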

Not sure where your business stands with AI?

Find out your AiDOPTION Score — a free 10-minute diagnostic that measures your AI readiness across Strategy, Technology, and People. You'll get a personalised score and practical recommendations.

How to Talk to Your Team About AI Without Creating Fear or Resistance

The way you communicate about AI inside your organisation may matter more than any technology decision you make. Get the communication right and you create an environment where people are curious, engaged, and willing to learn. Get it wrong and you generate the kind of sustained, quiet resistance that can undermine even the most well-resourced AI adoption programme.

Internal AI communication is an area where the stakes are high, and the guidance is limited. Most of what's written about AI communication focuses on external messaging — customer-facing communication, PR. Internal communication is comparatively neglected, and the consequences of that show up in failed adoptions.

Why do employees resist AI adoption?

The most honest answer is: because they're not sure what it means for them. And in the absence of clear information, people fill the gap with the most available narrative — and the most available narrative about AI is overwhelmingly one of job displacement.

Research from Edelman's 2024 Trust Barometer found that 58% of workers are worried about AI's impact on their jobs. That anxiety doesn't disappear when a business starts adopting AI; it intensifies, particularly when the communication is unclear or absent. Employees who feel uncertain about their future are less willing to invest in learning new tools, less likely to engage positively with adoption programmes, and more likely to become passive resisters who slow down implementation.

Resistance is rarely dramatic. It shows up as: low engagement with training programmes, minimal use of new tools, passive non-compliance, and an undercurrent of negative conversation that spreads through informal networks faster than any official communication can counter it.

What should your internal AI communication cover?

Effective internal AI communication addresses four core questions that employees — consciously or not — are asking.

First: Why? Why is the business adopting AI? What problem does it solve? What opportunity does it create? Communication that leads with business rationale, explained plainly, gives employees context that makes everything that follows more meaningful.

Second: What? What specifically is changing? Which tools? Which processes? Which teams? Vague communication about "AI transformation" generates anxiety. Specific communication about "we're introducing an AI assistant to help the sales team with proposal drafting" is manageable and concrete.

Third: What does it mean for me? This is the question employees most want answered and the one most often left unanswered in internal communications. Directly addressing the job security question — honestly, not with empty reassurance — builds more trust than avoiding it.

Fourth: What do I need to do? Clear, specific guidance about what employees are expected to do in response to the change reduces uncertainty and provides a sense of agency. Uncertainty is stressful. Knowing what's expected and how to get there is manageable.

How do you time AI communications effectively?

Timing is one of the most underrated elements of change communication. Gartner research on technology adoption highlights a consistent pattern: communications that arrive too early (before people can take any action) generate anxiety; communications that arrive too late (after changes are already visible) generate distrust.

The right approach is sequenced communication that runs ahead of but in parallel with the adoption programme. Initial communications should come from senior leadership, explain the strategic rationale, and acknowledge the change that's coming without overwhelming people with detail. Middle-stage communications should become more specific as the adoption gets closer — what tools, what processes, what support is available. Launch communications should be practical and action-oriented — here's what you need to do, here's where to get help.

Post-launch communication is often forgotten but critically important. The period immediately after go-live is when most user uncertainty peaks, when confusion is highest, and when the risk of disengagement is greatest. Proactive, supportive communication in this period — acknowledging the challenges, celebrating early wins, directing people to help resources — makes a significant difference to adoption rates.

What communication mistakes cause the most damage during AI adoption?

Four mistakes consistently appear in failed AI adoption programmes.

Silence. The absence of communication doesn't reduce anxiety — it amplifies it. In the absence of official communication, informal networks fill the gap with speculation and rumour.

Overselling. Communications that promise transformational outcomes without acknowledging the effort and change required erode trust quickly when reality doesn't match the messaging.

One-way communication. Employees who feel heard are far more receptive to change than those who receive only broadcast messages. Building in feedback mechanisms — surveys, forums, manager conversations — is as important as the outbound communication.

Inconsistency. When leaders say different things, or when the official message doesn't match what employees see happening, credibility collapses. Ensuring consistency across senior leadership before any communication goes out is non-negotiable.

Frequently Asked Questions

Should leaders communicate about AI before or after the strategy is finalised? Some communication should happen before strategy is finalised — specifically to acknowledge that AI is being evaluated and that employees will be kept informed. The risk of waiting for a complete strategy is that employees hear rumours and fill the gap themselves. An early, honest "we're working on this and we'll keep you informed" is far better than silence followed by a fully formed announcement.

How do you address job loss fears specifically? Directly and honestly. Vague reassurance ("AI won't replace jobs") isn't believed and often backfires. A more effective approach is to be specific about the roles and tasks that will and won't be affected, to explain what the business is investing in to support people through the transition, and to acknowledge that some roles will change significantly. Acknowledging reality while demonstrating genuine commitment to employee support is far more trust-building than unconvincing blanket reassurance.

What format works best for AI communications — all-hands, email, or one-on-ones? All three, at different stages. All-hands or town hall formats work well for initial strategic announcements — they signal that this is important and give everyone the same information at the same time. Written communications (email, intranet) work well for detailed, reference-quality information that people can return to. Manager-to-team conversations are most effective for addressing individual concerns and questions — no one feels comfortable asking "will I lose my job?" in a company-wide forum. A multi-channel approach, sequenced appropriately, is almost always more effective than relying on a single format.

30 Years in Technology Taught Me This About AI: It's Different This Time

I've been working in technology since the early 1990s. I've lived through the commercialisation of the internet, the dot-com boom and bust, the arrival of mobile, the rise of cloud computing, social media, and a dozen other waves that were each described, at the time, as the most transformative technology shift in a generation.

So when I tell you that AI is different — genuinely different, in ways that make most of what we've experienced before look like incremental change — I want you to understand that it's not something I say lightly. And I want to explain why, based on three decades of watching technology cycles, I believe that.

Why does every technology cycle feel different, and why is this one actually different?

Every major technology wave comes with a version of the same claim: this changes everything. And in most cases, the claim is both true and overstated. The internet did change everything. It also took fifteen years to fully manifest in mainstream business practice, and the "everything" it changed was more narrowly defined than the early evangelists suggested.

The pattern I've observed across multiple cycles is consistent: initial excitement, early adoption by the technically bold, a reality check when the hard implementation challenges emerge, gradual maturation, and eventually mainstream adoption that is genuinely transformative but slower and less dramatic than the peak of the hype cycle suggested.

AI is following this pattern. But there are three things about this wave that make it substantively different from everything I've worked with before, and they have direct implications for how mid-sized businesses should be thinking about their response.

What have 30 years of technology change taught me about adoption?

The most consistent lesson from 30 years of digital product development is that technology succeeds when it solves real problems for real people and fails when it's deployed for its own sake. This sounds obvious, but it's violated constantly.

I've seen large organisations spend years and significant capital on technology implementations that never delivered meaningful business outcomes because the adoption question was never properly answered. The technology worked. The people didn't change. And a technology that nobody uses delivers no value regardless of its capability.

The second consistent lesson is that the human side of technology adoption is always the harder problem. Technical implementation, while complex, is typically more predictable than people change. The businesses that succeed with technology consistently invest disproportionately in change management, training, and culture — not just infrastructure and software.

The third lesson is that the organisations that build genuine capability — rather than buying a solution and assuming it will work — compound their advantage over time. The businesses I've seen succeed with every major technology wave are those that developed internal expertise, not just vendor relationships.

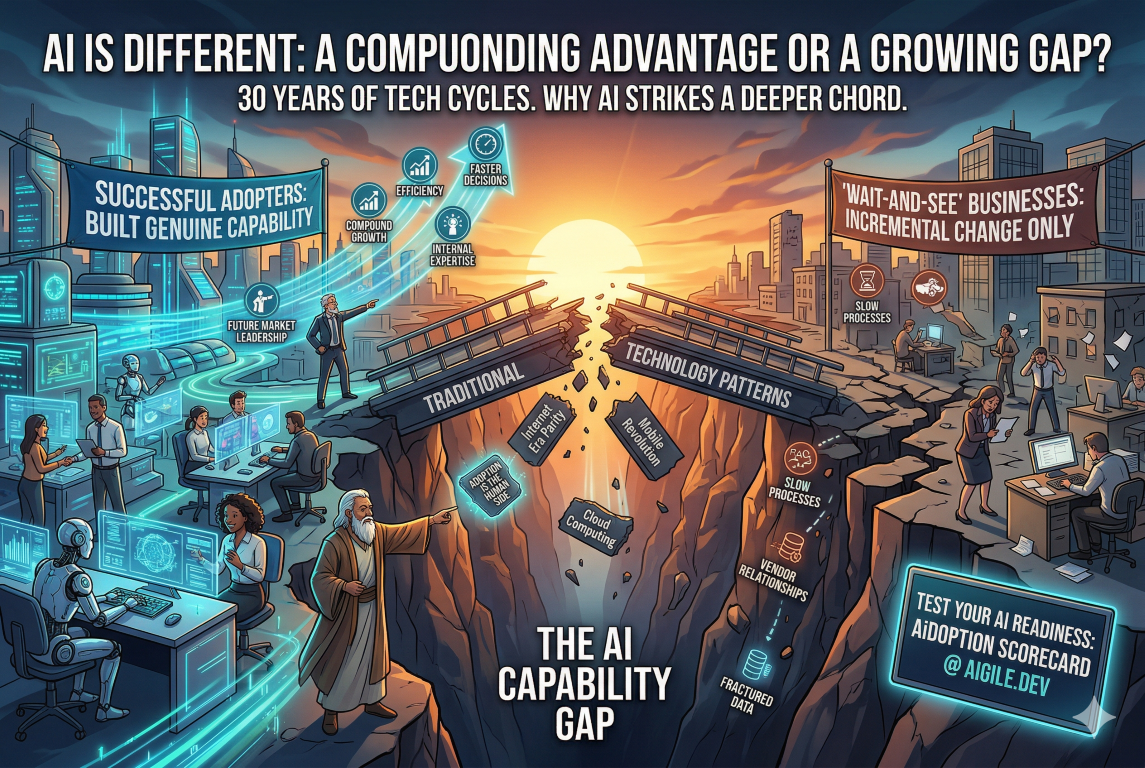

How is AI different from every previous technology wave?

Three things distinguish this wave from what came before.

First, the breadth of application is unprecedented. Previous technology waves had wide but ultimately bounded impacts. The internet transformed information exchange and commerce. Mobile transformed communication and location-based services. AI has the potential to augment almost every cognitive task performed in almost every business function. The scope is genuinely different.

Second, the pace of capability development is accelerating in a way that it didn't during previous technology waves. The internet developed quickly. But the capability curve for AI, meaning the rate at which systems are becoming more capable, is steeper and shows fewer signs of plateauing. Businesses are in a position where the landscape is changing under their feet in real time, not just at product launch cycles.

Third, for the first time, AI is creating a meaningful capability gap at the level of everyday operational work between businesses that adopt it well and those that don't. Previous technology waves largely created parity: when everyone has a website, having a website doesn't differentiate you. AI, done well, builds into a compounding advantage: better processes, better decisions, better products, delivered faster, at lower cost. That gap, once established, is hard to close.

What does this mean for business leaders today?

It means the strategic response can't be "wait and see." The businesses that wait for AI to mature before engaging will find themselves behind a curve that's already steep and getting steeper.

It also doesn't mean running at every AI opportunity simultaneously. The businesses I've seen succeed with major technology transitions are those that make deliberate, strategic choices about where to focus, build deep capability in those areas, and then expand from a position of genuine competence rather than scattered experimentation.

The question isn't whether to adopt AI. It's how to do it in a way that builds lasting capability — in your strategy, your technology, and most importantly, your people. Those three things together are what turn AI investment into business outcomes that actually show up in your results.

That's why I founded AiGILE. Not to sell technology, but to help businesses build the genuine AI capability that I've seen make the difference, over and over, for thirty years.

Frequently Asked Questions

Is AI really different from previous automation waves? Yes, in a meaningful way. Previous automation waves primarily replaced repetitive physical tasks (manufacturing) or highly structured cognitive tasks (data processing). AI can augment and in some cases replace judgment-intensive, unstructured cognitive work — writing, analysis, design, strategy support, and customer interaction. The scope of what can be affected is substantially broader than previous automation.

How long will it take for AI to fully transform most businesses? Based on historical technology adoption patterns, mainstream transformation of business operations will take 7–15 years. But the leading adopters will build substantial advantages within 2–5 years that will be very difficult for laggards to close. The question isn't about the end state — it's about where you want to be in the competitive landscape in three to five years.

What's the first thing a business leader should do about AI today? Understand where your business currently stands. Not with a gut feeling, but with a structured assessment across strategy, technology, and people dimensions. You can't make good decisions about where to invest without an honest baseline. The AiDOPTION Scorecard is designed to give you exactly that — a clear, specific picture of your current AI readiness and where the priority gaps are.

The AI Skills Gap Is Here. Is Your Team Ready?

Every significant technology transition creates a skills gap. The internet created demand for web developers, digital marketers, and e-commerce specialists that didn't exist a decade earlier. Mobile created demand for app developers and UX designers. AI is creating its own wave of new skill requirements — and unlike previous transitions, it's affecting almost every role across almost every function.

For mid-sized businesses, the AI skills gap represents both a risk and an opportunity. The risk is being outpaced by competitors who invest in building AI capability earlier and more systematically. The opportunity is that businesses that invest in their people's AI skills now will compound that advantage over time.

What is the AI skills gap and why does it matter now?

The AI skills gap is the difference between the skills employees currently have and the skills they need to work effectively in an AI-enabled environment. It's not primarily about knowing how to build AI systems — that's a technical specialisation. It's about knowing how to use AI tools effectively in everyday work, understanding AI's capabilities and limitations, knowing how to evaluate AI outputs critically, and being able to adapt workflows to take advantage of AI assistance.

Research from McKinsey and BCG consistently shows that the biggest barrier to AI adoption isn't the technology — it's the people. A 2024 BCG survey found that more than 80% of organisations identified employee capability as one of their top two barriers to scaling AI. The technology is advancing faster than the workforce's ability to use it effectively.

The urgency is real. Businesses that wait for the AI skills question to resolve itself — hoping employees will pick up what they need organically — are accumulating a gap that becomes progressively harder to close.

What skills do employees actually need for AI?

AI skills fall into three levels, and not all employees need all three.

Foundational AI literacy applies to everyone. This means understanding what AI can and can't do, knowing which tools are available and appropriate for which tasks, being able to spot when AI output needs checking, and understanding the basic principles of responsible AI use (privacy, accuracy, attribution). This level of skill should be universal across the organisation.

Functional AI proficiency applies to anyone whose role involves significant use of AI tools. This means being able to write effective prompts to get high-quality outputs, use AI tools efficiently as part of day-to-day workflows, critically evaluate and edit AI-generated content, and apply AI appropriately to the specific demands of their function.

Advanced AI capability applies to a smaller group of people — analysts, developers, and process owners — who need to configure AI tools, design AI-enabled workflows, or work with AI in technically complex ways. This level typically requires more formal training and often a technical background.

How do you assess your team's current AI capability?

The starting point is an honest, structured capability assessment. This isn't just a survey asking whether people have used AI tools — it needs to assess actual proficiency across the dimensions that matter for your business.

A basic capability assessment covers: current AI tool usage (what, how often, for what purpose); self-assessed confidence in using AI effectively; understanding of AI's limitations and responsible use principles; and function-specific skill requirements. This should be done at the team level, not just individually, because the unit of deployment is usually a team workflow rather than an individual task.

The assessment should produce a clear picture of where your workforce currently sits across the three levels, where the priority gaps are relative to your AI strategy, and which teams or functions need the most investment to close those gaps.
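One way to turn assessment results into that picture is sketched below: it records each person's highest assessed level per team, then lists the teams that fall short of a target, largest shortfall first. The `"none"` level (for people below foundational literacy), the team names, the target shares, and the sample data are all hypothetical and exist only to make the example run.

```python
# The three levels described above, plus a hypothetical "none" for people
# who have not yet reached foundational literacy.
LEVELS = ["none", "foundational", "functional", "advanced"]
RANK = {name: i for i, name in enumerate(LEVELS)}


def share_at_or_above(team_levels, level):
    """Fraction of a team whose assessed level is at least `level`."""
    if not team_levels:
        return 0.0
    threshold = RANK[level]
    reached = sum(1 for lvl in team_levels if RANK[lvl] >= threshold)
    return reached / len(team_levels)


def priority_gaps(assessment, targets):
    """Teams below their target share, sorted by largest shortfall first."""
    gaps = []
    for team, (level, target_share) in targets.items():
        actual = share_at_or_above(assessment.get(team, []), level)
        if actual < target_share:
            gaps.append((team, level, round(target_share - actual, 2)))
    return sorted(gaps, key=lambda gap: gap[2], reverse=True)


# Made-up assessment results: one entry per person, per team.
assessment = {
    "sales": ["foundational", "functional", "none", "functional"],
    "finance": ["foundational", "none", "advanced", "none"],
}

# Made-up targets: (level, share of the team that should reach it).
targets = {
    "sales": ("functional", 0.75),
    "finance": ("foundational", 1.0),
}

for team, level, shortfall in priority_gaps(assessment, targets):
    print(f"{team}: {shortfall:.0%} short of target at '{level}' level")
```

Working at team level rather than per individual mirrors the point above that the unit of deployment is usually a team workflow.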

What's the best approach to building AI skills in your workforce?

The single biggest mistake organisations make with AI skill-building is treating it as a one-off training exercise. A single workshop or e-learning module doesn't build lasting capability. Skill development that actually changes how people work requires a sustained, multi-modal approach.

Effective AI capability-building combines formal learning (structured training on specific tools and principles) with experiential learning (applying AI in real work tasks with coaching support), peer learning (AI champions and communities of practice within the organisation), and self-directed learning (access to resources for ongoing development). The mix should be designed around the specific needs and learning preferences of different groups, not as a one-size approach.

Critically, skill-building should be connected to the specific AI tools and workflows the organisation is adopting — not generic AI content. Learning is most effective when it's immediately applicable to real work. Employees who understand why they're learning something, and can use it the next day, develop skills far faster than those learning in the abstract.

Frequently Asked Questions

Do all employees need AI skills or just specific teams? At the foundational level — basic AI literacy and responsible use principles — yes, this should be universal. AI is increasingly present in the tools most employees use every day, whether or not those employees are consciously aware of it. Functional and advanced skills can be targeted to the roles and teams where they'll have the most impact. A phased approach, starting with high-impact functions and expanding over time, is practical for most mid-sized businesses.

Is "training" enough to close the AI skills gap? Training alone is not enough. Research consistently shows that skills learned in isolation don't transfer reliably to real-world application without reinforcement. Effective capability-building requires training, practice, and support. This is why we at AiGILE use the term "learning" rather than "training": it signals a broader approach that goes beyond a one-off programme to build genuine, lasting capability.

How long does it take to build meaningful AI capability in a team? For foundational AI literacy, a well-designed programme can produce meaningful improvement in 4–6 weeks. For functional proficiency with specific tools, typically 8–12 weeks of supported practice is required to reach reliable competence. Advanced capability development is more variable, but typically 3–6 months of structured learning and application. The key variable is whether learning is connected to real work — theoretical learning alone takes longer and sticks less well.

Why Most AI Adoption Fails: The Change Management Problem Nobody Talks About

Most AI adoption conversations start with technology. Which platform? Which tools? How much compute? It's understandable — the technology is genuinely exciting, and it's the most visible part of the process. But it's rarely where AI adoption actually breaks down.

The real reason most mid-sized businesses don't see meaningful results from AI isn't the technology. It's the people. Specifically, it's the absence of a structured approach to managing the change that AI represents for their workforce.

Why do so many AI initiatives fail despite good technology?

The numbers are striking. McKinsey research consistently shows that around 70% of large-scale change programmes fail to meet their objectives — and AI adoption is no different. Organisations invest in tools, roll out access, and then wonder why usage is low, results are patchy, and teams are quietly reverting to old ways of working.

The answer is almost always the same: the technology was deployed, but the change wasn't managed. People weren't given the context to understand why AI was being introduced. They weren't given the skills to use it effectively. They weren't given the reassurance that their jobs were secure. And so they did what humans naturally do when faced with an uncertain change — they resisted it, quietly and persistently.

This isn't a failure of the technology. It's a failure of change management.

What is change management in the context of AI adoption?

Change management is the structured process of preparing, supporting, and guiding people through a significant organisational change. In the context of AI adoption, it means helping employees understand what AI means for their role, building the skills and confidence they need to work alongside it, and creating the conditions where AI becomes part of normal working practice — not a mandated tool that people tolerate.

Effective AI change management covers five interconnected areas:

Leadership alignment — ensuring leaders are clear on why AI is being adopted, how it connects to business strategy, and what their role is in driving the change. Without this, mixed messages cascade through the organisation.

Communication — timely, honest, and consistent communication about what's happening, why it's happening, and what it means for different teams. Silence is interpreted as threat. Clarity builds trust.

Capability building — providing training and learning support that meets people where they are. Not a one-off workshop, but ongoing development that builds real fluency over time.

Support structures — helpdesks, AI champions, peer networks, and feedback mechanisms that give people somewhere to go when they're stuck or uncertain.

Measurement and adjustment — tracking adoption, monitoring sentiment, and actively adjusting the approach based on what's working and what isn't.

How do you build a change management plan for AI adoption?

The starting point is stakeholder analysis — mapping who is affected by the AI adoption, how significantly, and what their primary concerns are likely to be. The experience of a finance analyst whose workflow is being automated is very different from a customer service manager whose team is getting an AI co-pilot. Change management that treats everyone the same will resonate with no one.

From there, a communication plan needs to be developed. This should define who says what, to whom, at what stage of the adoption journey. Gartner research on technology adoption highlights that the timing of communications matters as much as the content — too early and people fixate on uncertainty, too late and they feel blindsided.

Training and capability building then need to be sequenced to follow communication, not precede it. People learn better when they understand the "why" before they tackle the "how." Learning programmes should be varied, practical, and role-specific, combining formal training with on-the-job support.

Finally, a feedback mechanism needs to be built in from day one. Pulse surveys, manager check-ins, and usage data all provide signals about how the adoption is landing and where additional support is needed.
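
As a rough illustration of how usage data can feed that feedback loop, here is a minimal Python sketch that computes an adoption rate per team and flags teams that may need extra support. Everything in it is an assumption made for illustration: the record layout, the sample names and numbers, the "at least 3 sessions in 30 days" definition of an active user, and the 30% threshold are all hypothetical and should be replaced with whatever your own tools and baseline suggest.

```python
from collections import defaultdict

# Hypothetical usage records: (user, team, sessions in the last 30 days).
# In practice these would come from your AI platform's usage export.
USAGE = [
    ("ana", "finance", 12),
    ("ben", "finance", 0),
    ("cho", "finance", 0),
    ("dev", "finance", 1),
    ("eli", "support", 9),
    ("fay", "support", 7),
    ("gus", "support", 0),
]


def adoption_by_team(records, min_sessions=3):
    """Return {team: share of users with access who have at least min_sessions}."""
    with_access = defaultdict(int)
    active = defaultdict(int)
    for _user, team, sessions in records:
        with_access[team] += 1
        if sessions >= min_sessions:
            active[team] += 1
    return {team: active[team] / with_access[team] for team in with_access}


def teams_needing_support(rates, threshold=0.30):
    """List teams whose adoption rate is at or below the (assumed) threshold."""
    return sorted(team for team, rate in rates.items() if rate <= threshold)


rates = adoption_by_team(USAGE)
flagged = teams_needing_support(rates)

for team, rate in sorted(rates.items()):
    print(f"{team}: {rate:.0%} active")
print("Follow up with:", ", ".join(flagged) or "no teams")
```

A number like this is only one signal. It is most useful when read alongside pulse survey results and manager check-ins, since low usage tells you where adoption is stalling but not why.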

What does successful AI change management look like in practice?

Businesses that manage AI change well share a few common characteristics. Leaders are visible and positive about the transition — not just in written communications, but in how they talk about AI in meetings and how they model its use. Communication is honest about both the opportunities and the challenges, rather than relentlessly optimistic in a way that erodes trust.

Learning is treated as ongoing rather than one-off. Staff have access to support when they need it, not just at the moment of go-live. And there's a clear measurement framework in place so the organisation knows whether adoption is actually happening — and can intervene if it isn't.

Most importantly, successful AI change management starts early. By the time a new AI tool is ready to deploy, the change management groundwork should already be well underway. Retrofitting change management to a failed rollout is considerably harder than building it in from the start.

Frequently Asked Questions

How long does change management take during AI adoption? There's no single answer: it depends on the scale of the change, the size of the organisation, and how mature its existing change practices are. A focused AI tool rollout in one department might require 6–8 weeks of structured change support. A whole-of-business AI adoption programme could run for 12–18 months. As a rule of thumb, if the timeline feels too short for the change management component, it probably is.

Can a mid-sized business handle change management internally? Yes, with the right support. Internal HR and communications teams often have much of the capability needed. Where specialist help adds the most value is in the design phase — building the initial framework, stakeholder mapping, and communication strategy — and in specific skills like capability assessment and facilitation of leadership alignment sessions.

What's the biggest sign that change management is being neglected? Low adoption rates despite high access to tools. If you've rolled out an AI platform and only 20–30% of users are actively using it after several weeks, the technology isn't the problem. The experience gap — the distance between what the tool can do and what people feel confident doing with it — is the problem. That's a change management challenge.
